In the real-life example from the experiment, you can see a comparison of the data stored in JSON, JSONB, and normal tables. Below is a comparison of table sizes and of the time needed for a query, showing which one to use when you need to solve a problem quickly.

How to read, write, and filter by JSON fields. How does it compare to PostgreSQL, especially on query performance? If you are using PostgreSQL, you must pass in an array of objects to match, even if that array only…

You can test incoming JSON data for required fields, data type, and range.

Check out the story time at 19:40 in the video to think about a possible use case.

Here are some examples of when you can use JSONB:

- You have NoSQL, but you need some ACID
- Storing document data, like settings, API queries (HAR format?)…
- Audit trail
- Fast-changing data
- Data with many optional columns/keys (could inherited tables work?)
- As an aggregate (e.g. storing JSONB into a table)
- When you have JSON data and quickly need to do some queries

When not to use JSONB

Here are some examples of when it could be a bad idea to use JSONB:

- When you need to filter the data
- When you need indexes
- When you need constraints
- When you need a key-value store
- When you could use a table
- When other people need to know what is going on
- When you need to store a lot of data in one row

Conclusion

There is no absolute answer: maybe JSONB works for you, maybe not. Programming, like all other sorts of engineering, is about compromises. But we need to try it to see what options we have and set benchmarks.
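
The point about testing incoming JSON for required fields, data type, and range can be sketched on the client side in plain Python. This is only an illustration: the field names and limits in `RULES` are made up, and inside PostgreSQL the same idea would more likely be expressed as constraints on the JSONB column.

```python
import json

# Hypothetical rules for illustration: field -> (expected type, min, max).
# None means "no bound". These names are not taken from the experiment.
RULES = {
    "user_id": (int, 1, None),
    "retweet_count": (int, 0, 10_000_000),
    "lang": (str, None, None),
}


def validate_payload(raw):
    """Return a list of problems; an empty list means the payload passed."""
    try:
        doc = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(doc, dict):
        return ["top-level value must be an object"]

    problems = []
    for field, (expected_type, low, high) in RULES.items():
        if field not in doc:
            problems.append(f"missing required field: {field}")
            continue
        value = doc[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            problems.append(f"wrong type for {field}")
            continue
        if low is not None and value < low:
            problems.append(f"{field} below minimum {low}")
        if high is not None and value > high:
            problems.append(f"{field} above maximum {high}")
    return problems
```

Calling `validate_payload` on each incoming document before inserting it gives a list of readable problems instead of a failed insert.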

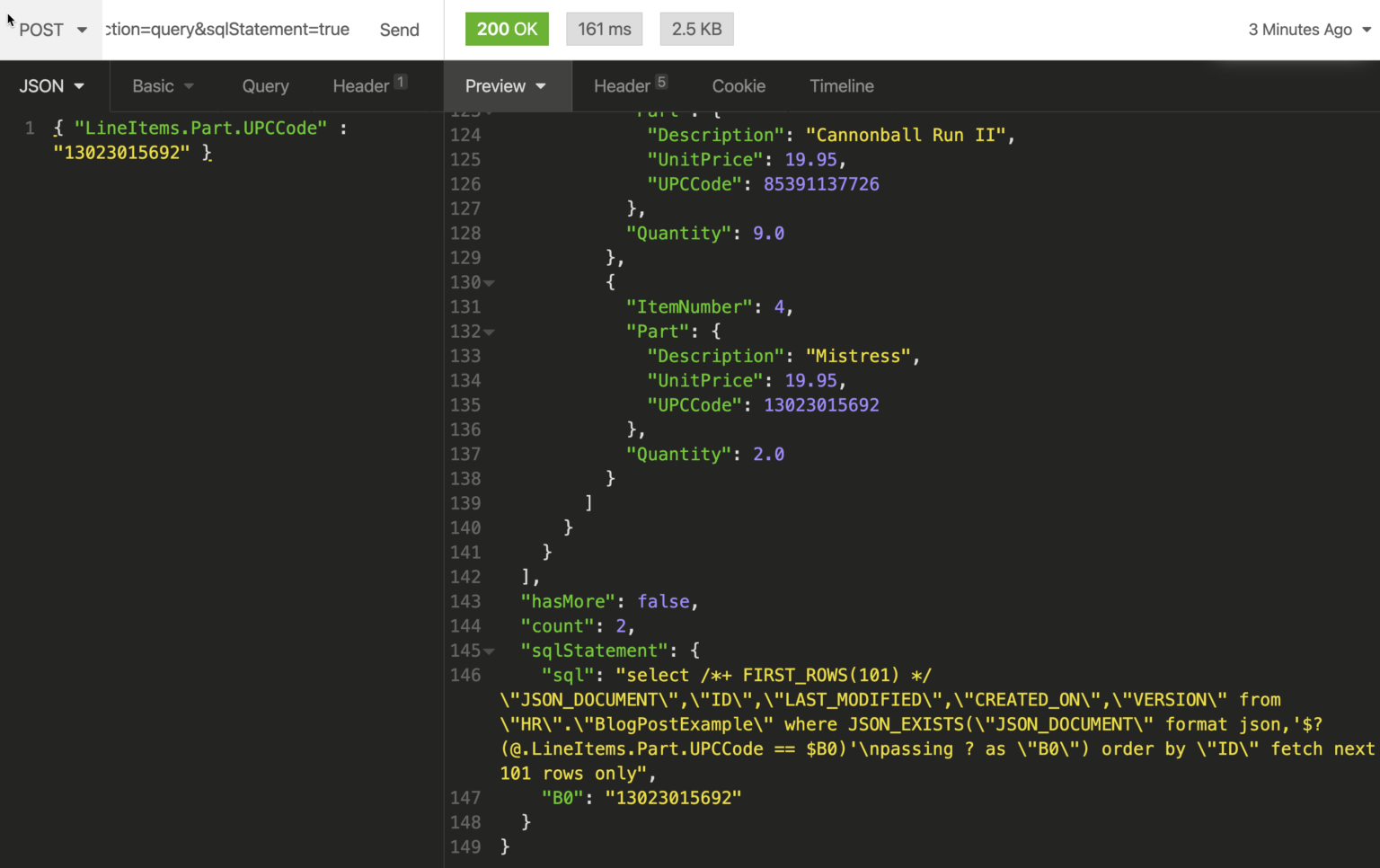

PostgreSQL is an awesome database, with an awesome data type, JSON. It actually has two JSON data types, json and jsonb.

A few cool things about JSONB are that you can filter the data, index specific keys, index the whole thing (jsonb_path_ops), or add constraints. You can interact with JSONB and the normal table columns within the same query.

It is hard to optimize your query, as there are no statistics within JSONB or JSON fields: each field is considered to be just one column.

A few limitations:

- The concat operator (||) is only top-level
- Max row size of 268 435 455 bytes (268.43 MB)
- NaN and infinity are not allowed
- JSON null is not SQL NULL (null IS NOT NULL)
- \u0000 is not allowed

`CREATE INDEX ixhotelregions ON travel-sample (country, state, city) WHERE type = 'hotel'` If we execute the same query again it should run in 7ms, note that in the new query plan there is.

Experiment Time

As for the setup, I installed PostgreSQL 12 on Ubuntu 18.04 and ran Python scripts, each query 12 times, 4 concurrently. The PostgreSQL configuration:

- max_connections = 200
- shared_buffers = 4GB
- effective_cache_size = 12GB
- maintenance_work_mem = 1GB
- checkpoint_completion_target = 0.7
- wal_buffers = 16MB
- default_statistics_target = 100
- random_page_cost = 1.1
- effective_io_concurrency = 200
- work_mem = 5242kB
- min_wal_size = 1GB
- max_wal_size = 2GB
- max_worker_processes = 8
- max_parallel_workers_per_gather = 4
- max_parallel_workers = 8

The steps of the experiment:

1. Figure out how the Twitter streaming API works.
2. Save about 4M Tweets as JSON (Avengers and Game of Thrones).
3. Save these 4M Tweets into a table, one for Avengers, one for Game of Thrones.
4. Join tweets_avengers and tweets_got into one table, still in JSON.
5. Model a single table with all 2nd-level JSON objects as JSONB fields.

You can see how the experiment went in the video.
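
The setup mentions Python scripts that ran each query 12 times, 4 concurrently. The original scripts are not shown, so the following is only one possible reading of that setup. `run_query` is a hypothetical stand-in that sleeps briefly; in a real run it would be replaced by a call through a database driver.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor

RUNS_PER_QUERY = 12  # "each query 12 times"
CONCURRENCY = 4      # "4 concurrently"


def run_query(sql):
    """Hypothetical stand-in for a real database call."""
    time.sleep(0.001)
    return sql


def time_once(sql):
    """Run the query once and return the elapsed wall-clock seconds."""
    start = time.perf_counter()
    run_query(sql)
    return time.perf_counter() - start


def benchmark(sql, runs=RUNS_PER_QUERY, workers=CONCURRENCY):
    """Run `sql` `runs` times with at most `workers` executions in flight."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        timings = list(pool.map(time_once, [sql] * runs))
    return {
        "runs": len(timings),
        "min": min(timings),
        "median": statistics.median(timings),
        "max": max(timings),
    }
```

Reporting the median alongside the minimum and maximum makes it easier to spot a run skewed by a cold cache or a noisy neighbour.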

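Some of the limits listed above can be illustrated without a database. The sketch below uses plain Python dictionaries as a stand-in for JSONB values. `top_level_concat` mimics how `||` treats two objects (keys on the right replace keys on the left wholesale, nested objects are not merged), and `find_jsonb_problems` looks for NaN, infinity, and the NUL character before a document is sent to PostgreSQL. Both function names are made up for this example.

```python
import math


def top_level_concat(left, right):
    """Mimic jsonb || for two objects: a shallow, top-level merge only."""
    return {**left, **right}


def find_jsonb_problems(value, path="$"):
    """Return the paths of values that JSONB would reject."""
    problems = []
    if isinstance(value, float) and not math.isfinite(value):
        problems.append(f"{path}: NaN or infinity")
    elif isinstance(value, str) and "\u0000" in value:
        problems.append(f"{path}: NUL character in string")
    elif isinstance(value, dict):
        for key, child in value.items():
            if isinstance(key, str) and "\u0000" in key:
                problems.append(f"{path}: NUL character in key")
            problems.extend(find_jsonb_problems(child, f"{path}.{key}"))
    elif isinstance(value, list):
        for index, child in enumerate(value):
            problems.extend(find_jsonb_problems(child, f"{path}[{index}]"))
    return problems


tweet = {"id": 1, "user": {"name": "a", "lang": "en"}}
patch = {"user": {"name": "b"}}
merged = top_level_concat(tweet, patch)
# The nested "lang" key is gone, because "user" was replaced as a whole.
print(merged)
```

If a deep merge is what you need, it has to be done key by key rather than with a single concatenation.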